Meet Nightcrawler: My First Autonomous Agent

How I built an autonomous AI agent on a 2GB LXC container in my homelab, complete with an X-Men persona and a subscription-based LLM strategy.

Part 3 of the series: Building The Hub

There’s a moment in every side project where it stops being a toy and starts feeling like a system. For The Hub, that moment was February 8th, 2026 — the day I deployed Nightcrawler.

The idea

I’d been building The Hub as an Obsidian-based knowledge system for a while, but it was passive. A well-organized vault, sure, but it didn’t do anything on its own. I wanted an agent that could read my notes, understand context, and have conversations with me. Not a chatbot — an agent that actually knows my projects, my preferences, and what I’m working on.

So I sat down at 16:30 on a Sunday and started building.

Why “Nightcrawler”?

The Hub borrows its name from S.H.I.E.L.D. — the idea of a central intelligence system coordinating specialized agents. The agents themselves get X-Men codenames. Nightcrawler — the teleporting mutant with the German accent — felt right for an agent that could appear anywhere in the system, poking into different contexts.

There’s a fun detail: the comic-book Nightcrawler is German, so this agent addresses me as “Mein Freund.” It’s a small thing, but it gives the interactions personality. When you’re debugging at midnight and your agent greets you with “Guten Abend, Mein Freund,” the whole experience feels less like talking to a tool and more like working with a teammate.

The architecture

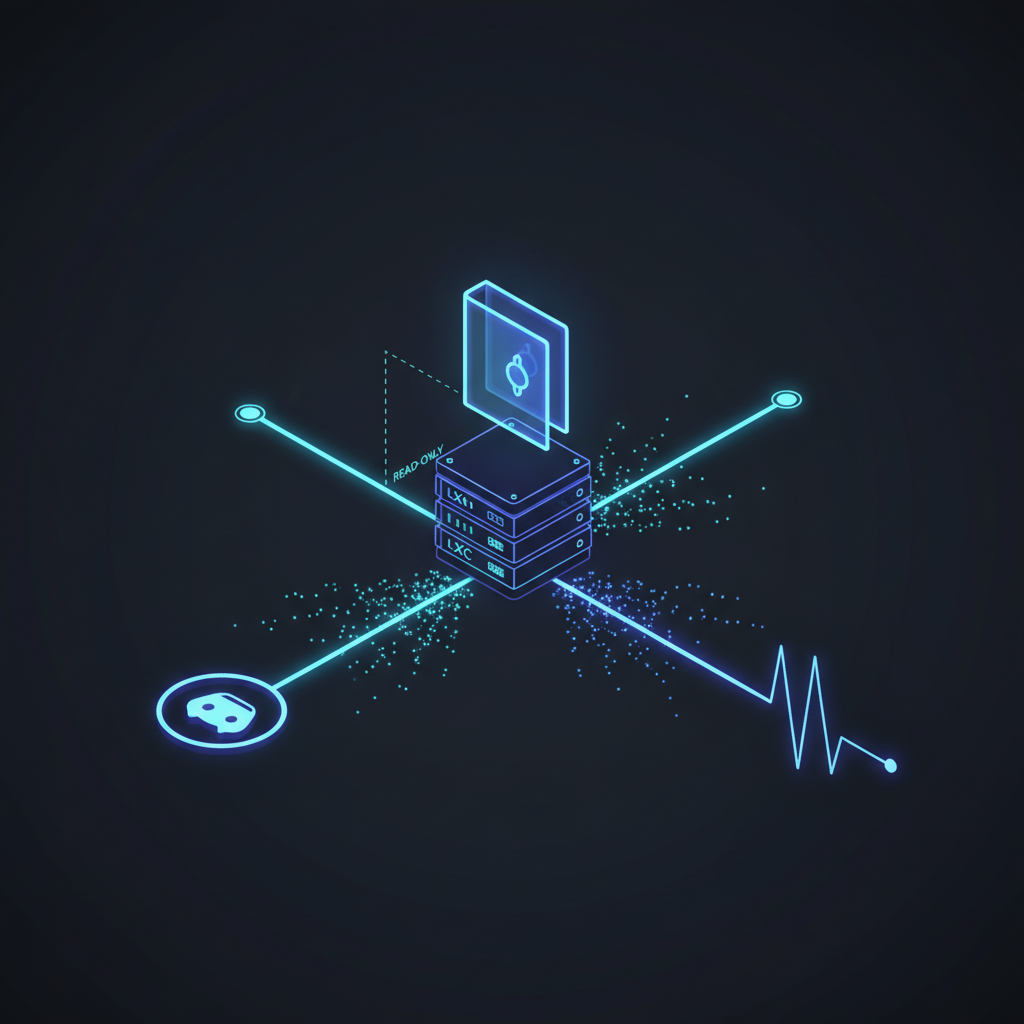

Nightcrawler runs in a dedicated LXC container on my Proxmox server (codename: Cybertron). It’s a modest setup: Ubuntu 24.04, 2 CPU cores, 2 GB of RAM. The stack is Node.js with two distinct operational tracks:

Track 1: Discord Chat Bot

The message flow is straightforward: incoming Discord message, filter (ignore bots, check channel), build context from The Hub, send to LLM via CLI, post response back. Discord was the obvious first choice for an interface — it’s free, it’s flexible, and I already have it open all day.
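The flow above maps onto a single async handler. This is a minimal sketch, not Nightcrawler's actual code: the message is a plain object whose `author.bot`, `channelId`, and `content` fields mirror the names discord.js uses, and `buildHubContext`, `askLlm`, and `reply` are hypothetical functions injected so the flow can run without Discord or an LLM.

```javascript
// Hypothetical sketch of the Track 1 pipeline. Dependencies are injected so the
// flow can be exercised without a live Discord connection or an LLM.
async function handleMessage(message, deps) {
  const { allowedChannelIds, buildHubContext, askLlm, reply } = deps;

  // 1. Filter: ignore bots (including this one) and channels we don't watch.
  if (message.author.bot) return { handled: false, reason: "bot" };
  if (!allowedChannelIds.has(message.channelId)) {
    return { handled: false, reason: "channel" };
  }

  // 2. Build context from The Hub (read-only).
  const context = await buildHubContext(message.content);

  // 3. Send context plus the user's message to the LLM (via the CLI, in this setup).
  const answer = await askLlm(context, message.content);

  // 4. Post the response back to the channel the message came from.
  await reply(message.channelId, answer);
  return { handled: true, answer };
}
```

With discord.js, a handler like this would be registered on the client's `messageCreate` event; reading `message.content` in server channels also requires the bot to have the Message Content intent enabled.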

Track 2: Heartbeat Monitor

A systemd timer fires every 15 minutes. Nightcrawler checks the state of The Hub — new files, recent changes, pending tasks — and can proactively surface things. Think of it as a background daemon for your knowledge base.
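A heartbeat tick could look something like this, sketched under assumptions: the systemd timer simply runs a script, and that script passes in snapshots of the Hub's Markdown files (`path`, `mtimeMs`, `content`, gathered for example with `fs.stat` and `fs.readFile`). The `heartbeat` name, the input shape, and treating an unticked `- [ ]` checkbox as a "pending task" are all illustrative choices, not the real implementation.

```javascript
// Hypothetical heartbeat check over file snapshots from the Hub clone.
// Matches an unticked Markdown checkbox such as "- [ ] write post" or "* [ ] todo".
const OPEN_TASK = /^\s*[-*] \[ \]/;

function heartbeat(files, lastRunMs) {
  // Files modified since the previous tick, in stable (sorted) order.
  const changed = files
    .filter((f) => f.mtimeMs > lastRunMs)
    .map((f) => f.path)
    .sort();

  // Every open task, with its file and 1-based line number.
  const pendingTasks = [];
  for (const f of files) {
    f.content.split("\n").forEach((line, i) => {
      if (OPEN_TASK.test(line)) {
        pendingTasks.push({ path: f.path, line: i + 1, text: line.trim() });
      }
    });
  }

  // Only surface something when the vault actually changed, so a standing
  // task list doesn't trigger a message every 15 minutes.
  return { changed, pendingTasks, shouldNotify: changed.length > 0 };
}
```

Keying notifications off changed files rather than open tasks is a deliberate choice in this sketch; a long-lived task list would otherwise produce noise on every tick.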

CLI over API: the subscription play

Here’s a design decision that raised some eyebrows when I mentioned it to other developers: Nightcrawler calls Claude and Gemini via their CLI tools, not their APIs.

Why? I already pay for Claude Pro and Gemini Advanced subscriptions. Those subscriptions include generous usage through their respective CLIs. If I used the API directly, I’d be paying per token on top of my existing subscriptions. For a personal project running on a homelab, the CLI approach means my AI costs are effectively zero beyond what I’m already paying.

The command looks something like:

```bash
claude --max-turns 1 --print "$(cat context.md)" "$(cat user-message.txt)"
```

It’s not elegant, but it works. And when you’re running an agent 24/7, “works without additional cost” beats “elegant but expensive.”
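When the same call is made from Node.js instead of a shell, the main hazard is quoting: Hub notes and chat messages are full of quotes, `$` signs, and newlines. One way to sidestep that, sketched here as an assumption rather than the project's code, is `spawn` with an argument array, which bypasses the shell entirely. `runCli` and `claudeArgs` are hypothetical helpers; the only flags used are the ones in the command above.

```javascript
// Run a CLI and capture its output without going through a shell, so quotes,
// $ signs, and newlines in arguments reach the program verbatim.
async function runCli(command, args, { timeoutMs = 120_000 } = {}) {
  // Dynamic import works from both CommonJS and ES module code.
  const { spawn } = await import("node:child_process");

  return new Promise((resolve, reject) => {
    const child = spawn(command, args, {
      stdio: ["ignore", "pipe", "pipe"], // no stdin, so the CLI never waits for input
      timeout: timeoutMs,                // Node kills the child after this many ms
    });
    let stdout = "";
    let stderr = "";
    child.stdout.setEncoding("utf8").on("data", (chunk) => { stdout += chunk; });
    child.stderr.setEncoding("utf8").on("data", (chunk) => { stderr += chunk; });
    child.on("error", reject); // e.g. the binary is not on PATH
    child.on("close", (code, signal) => resolve({ code, signal, stdout, stderr }));
  });
}

// Mirrors the shell command: context and message as two separate arguments.
function claudeArgs(context, userMessage) {
  return ["--max-turns", "1", "--print", context, userMessage];
}

// Usage (not run here): const result = await runCli("claude", claudeArgs(ctx, msg));
```

One limit worth knowing: Linux typically caps a single command-line argument at 128 KiB, so a very large Hub context can fail with `E2BIG` when passed this way; piping it over stdin is an alternative if the CLI accepts input there.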

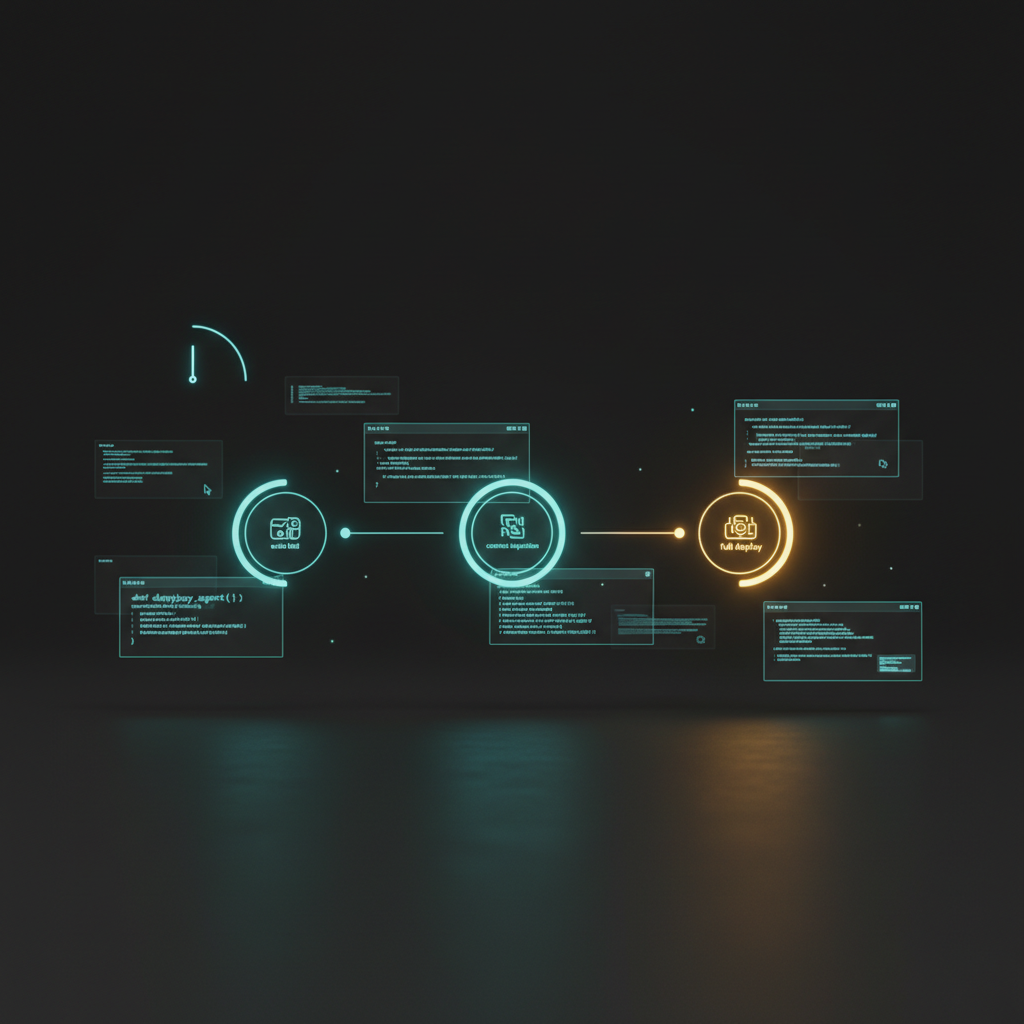

The build session

The whole thing came together in about three hours. By 18:00, I had a basic echo bot working on Discord — Phase 1, just proving the pipeline. By 19:30, Phase 3 was live: full Hub context injection, Claude as the LLM provider, deployed to the container with a proper systemd service.

Along the way, I hit the kinds of bugs that make you question your life choices:

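ES module load order. I was using dotenv in an ES module project, but the config wasn’t loaded before other modules tried to read environment variables. Classic Node.js timing issue: in ES modules, static imports are hoisted and evaluated before any code in the importing file runs, so a dotenv.config() call sitting below the imports fires too late. The fix was to load dotenv before anything else, as the very first import in the entry point, ahead of any module that reads process.env.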
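A defensive pattern that pairs well with the import-order fix, sketched as an assumption rather than the project's code: read environment variables when a function is called instead of at module load time, and fail loudly when one is missing. `loadConfig` and the variable names `DISCORD_TOKEN`, `HUB_PATH`, and `HEARTBEAT_MINUTES` are hypothetical.

```javascript
// Read configuration lazily, at call time, so module evaluation order no longer
// matters as long as dotenv has run before the first call.
const REQUIRED = ["DISCORD_TOKEN", "HUB_PATH"]; // hypothetical variable names

function loadConfig(env = process.env) {
  const missing = REQUIRED.filter((key) => !env[key]);
  if (missing.length > 0) {
    // A clear startup error beats an undefined token surfacing deep inside a library.
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
  return Object.freeze({
    discordToken: env.DISCORD_TOKEN,
    hubPath: env.HUB_PATH,
    // Optional setting with a default (also hypothetical).
    heartbeatMinutes: Number(env.HEARTBEAT_MINUTES ?? 15),
  });
}
```

For the load order itself, dotenv documents `import "dotenv/config";` as its ES module form; as the first import of the entry point, it populates `process.env` before the modules imported after it are evaluated.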

Claude’s agent mode. Without --max-turns 1, Claude would sometimes enter agent mode and try to use tools, which isn’t what you want when you’re piping output back to Discord. One flag fixed it, but it took a few confusing responses to figure out why the agent was trying to read files instead of answering questions.

Non-zero exit codes. The Claude CLI sometimes returns non-zero exit codes for responses that aren’t errors from the application’s perspective. My error handling treated these as failures and retried, which led to duplicate messages. The fix was to check for actual error conditions rather than relying on exit codes alone.
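The exit-code fix can be sketched as a small policy function. This is an illustrative heuristic, not the CLI's documented contract: treat non-empty stdout as a usable answer regardless of exit code, and retry only when nothing came back, so one answer can't be generated and posted twice. It assumes a `{ code, stdout, stderr }` result such as a typical child-process wrapper returns; `classifyCliResult` and `askWithRetry` are hypothetical names.

```javascript
// Decide success from what the CLI produced, not from the exit code alone.
function classifyCliResult({ code, stdout, stderr }) {
  const text = stdout.trim();
  if (text.length > 0) {
    // Usable answer: success, but keep a note if the exit code was non-zero.
    return { ok: true, text, warning: code !== 0 ? `exit code ${code}` : null };
  }
  // Empty output is a genuine failure; keep stderr for the logs.
  return { ok: false, error: stderr.trim() || `exit code ${code}, no output` };
}

// Retry only failed runs; a run that produced text is returned immediately.
async function askWithRetry(run, maxAttempts = 2) {
  let last;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    last = classifyCliResult(await run());
    if (last.ok) return last;
  }
  return last;
}
```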

Gemini to Claude

I initially tried Gemini as the LLM provider. It worked, but Claude handled the structured system prompts much better. When you’re injecting a persona file, project context, and conversation history into a prompt, the model needs to reliably follow the layered instructions. Claude was noticeably better at maintaining Nightcrawler’s personality while still giving useful technical answers.
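Layered instructions are easier to follow when each layer is clearly delimited. A sketch of how a persona file, Hub context, conversation history, and the new message might be stacked into one prompt; the section headings, the `buildPrompt` name, and the history cap are assumptions for illustration.

```javascript
// Hypothetical prompt assembly: persona first, then Hub context, then recent
// conversation, then the new message, each under its own heading.
function buildPrompt({ persona, hubContext, history = [], userMessage, maxHistory = 10 }) {
  // Keep only the last `maxHistory` turns (slice(-0) would keep everything, so avoid it).
  const recent = history
    .slice(Math.max(0, history.length - maxHistory))
    .map(({ role, text }) => `${role === "user" ? "User" : "Nightcrawler"}: ${text}`)
    .join("\n");

  return [
    "## Persona", persona.trim(),
    "## Hub context", hubContext.trim(),
    "## Conversation so far", recent || "(none)",
    "## New message", userMessage.trim(),
  ].join("\n\n");
}
```

Putting the persona first and the new message last is one reasonable ordering: the stable instructions stay in a fixed position while the volatile parts change from call to call.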

That said, Gemini stays in the stack as a fallback. If Claude’s CLI is down or rate-limited, Nightcrawler can switch providers without any architectural changes.
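Switching providers without architectural changes works as long as every provider sits behind the same interface. A minimal sketch, assuming each provider is wrapped as an async `complete(prompt)` function; the wrappers around the Claude and Gemini CLIs are not shown, and `completeWithFallback` is a hypothetical name.

```javascript
// Try each provider in order and return the first non-empty answer.
// A provider is { name, complete: async (prompt) => string }.
async function completeWithFallback(providers, prompt) {
  const failures = [];
  for (const { name, complete } of providers) {
    try {
      const text = await complete(prompt);
      if (text && text.trim()) return { provider: name, text: text.trim() };
      failures.push(`${name}: empty response`);
    } catch (err) {
      // Down, rate-limited, or crashed: record why and move to the next provider.
      failures.push(`${name}: ${err.message}`);
    }
  }
  throw new Error(`All providers failed (${failures.join("; ")})`);
}
```

Callers only ever see `complete(prompt)`, so reordering the list, say to put Gemini first during a Claude outage, is a configuration change rather than a code change.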

Design principles

Three principles guided the build:

- Hub as read-only context. Nightcrawler reads The Hub via a git clone but never writes to it directly. This keeps the knowledge base clean and version-controlled. Any output goes through a separate pipeline.

- Discord as interface. Free, cross-platform, supports rich embeds, has a mature bot API. Building a custom UI would have been premature; I needed to validate the agent concept first.

- CLI over API. Use what you’re already paying for. Subscription-based AI access through CLI tools keeps the marginal cost at zero.
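Treating The Hub as read-only context still leaves the question of what to include in each prompt. One possible approach, sketched under assumptions: take notes read from the local clone, newest first, and add them until a character budget is reached. The `assembleHubContext` name, the note shape, and the default budget are hypothetical, and characters are only a rough stand-in for tokens.

```javascript
// Build a context string from notes read out of the Hub clone (e.g. via
// fs.readFile). Nothing here writes back to The Hub.
function assembleHubContext(notes, budgetChars = 20_000) {
  const parts = [];
  let used = 0;
  // Newest first, without mutating the caller's array.
  const newestFirst = [...notes].sort((a, b) => b.mtimeMs - a.mtimeMs);
  for (const note of newestFirst) {
    const block = `### ${note.path}\n${note.content.trim()}\n`;
    // Count the "\n" separator that join() adds between blocks.
    const cost = block.length + (parts.length > 0 ? 1 : 0);
    if (used + cost > budgetChars) continue; // skip notes that don't fit; smaller ones may still fit
    parts.push(block);
    used += cost;
  }
  return parts.join("\n"); // total length equals `used`, never above the budget
}
```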

What I learned

Building Nightcrawler taught me that the hard part of an AI agent isn’t the AI — it’s the plumbing. The LLM call is maybe 10% of the code. The rest is context assembly, error handling, service management, and making sure things restart cleanly when they inevitably crash at 3 AM.

It also taught me that personality matters more than I expected. A generic “AI assistant” feels like a tool. An agent with a name, a greeting style, and consistent behavior feels like part of the system. That distinction matters when you’re building something you’ll interact with every day.

Nightcrawler was just the beginning. Within weeks, I’d be running three agents on the same container, building a custom chat interface, and setting up cross-agent communication.

For now: Nightcrawler was alive, running on 2 GB of RAM in my basement, and greeting me with “Mein Freund.” The Hub had its first agent.