Meet SAGE: The Knowledge Librarian

Adding a fourth AI agent to The Hub — a knowledge curator who classifies YouTube videos, recommends TV, and proved that adding agents really is a content problem.

Part 8 of the series: Building The Hub

When I built the shared runtime architecture for Nightcrawler, Gideon, and JARVIS, I made a claim: adding a new agent is a content problem, not a code problem. Write the identity files, create a systemd service, done. No new infrastructure, no architectural changes.

On March 1st, I put that claim to the test. SAGE went from concept to deployed in a single session.

The missing role

Three agents were covering operations (Nightcrawler), strategy (Gideon), and engineering (JARVIS). But there was a gap in the system. I was consuming a lot of content — YouTube videos, articles, TV programming — and none of the existing agents were designed to help me organize, classify, or curate any of it. Nightcrawler could capture links, but capturing isn’t curating. I needed someone whose entire purpose was knowledge management.

Enter SAGE — the Knowledge Librarian and Content Curator. SAGE addresses me as “Scholar,” which felt right for an agent whose job is treating information with respect. Not just storing it, but understanding it, categorizing it, and surfacing it at the right time.

The persona design was intentional. Where Nightcrawler is energetic and proactive, SAGE is measured and deliberate. Where JARVIS delivers technical precision, SAGE delivers contextual recommendations. A librarian doesn’t just know where every book is — they know which book you need right now, even if you didn’t ask for it.

Deployment: the content problem test

The deployment validated the shared runtime design perfectly. Here’s everything I needed to create:

- identity.md: SAGE’s knowledge, personality, and behavioral guidelines
- Brain.md: initial memory state and knowledge boundaries
- system-prompt.txt: the system prompt loaded at conversation start
- A systemd service file pointing to the shared runtime with SAGE’s config

No code changes to the runtime. No new dependencies. No architectural modifications. The shared runtime loaded SAGE’s identity files, connected to Hub Chat, and SAGE was live. The entire deployment — writing persona files, creating the service, verifying it responded correctly in chat — took less than an hour.
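To make the "content problem" idea concrete, here is a minimal sketch of what loading an agent from its identity files could look like. It is written in Python for brevity; the real runtime is a Node.js process and its internals are not shown in this post, so the function name `load_agent` and the one-directory-per-agent layout are assumptions, not the actual implementation.

```python
from pathlib import Path
import tempfile

# File names come from the post; the directory layout is an assumption.
IDENTITY_FILES = ("identity.md", "Brain.md", "system-prompt.txt")


def load_agent(agent_dir: Path) -> dict[str, str]:
    """Read every identity file for one agent and fail loudly if one is missing."""
    missing = [name for name in IDENTITY_FILES if not (agent_dir / name).is_file()]
    if missing:
        raise FileNotFoundError(f"{agent_dir.name}: missing {', '.join(missing)}")
    return {
        name: (agent_dir / name).read_text(encoding="utf-8")
        for name in IDENTITY_FILES
    }


# Usage: build a throwaway "sage" directory with placeholder files and load it.
with tempfile.TemporaryDirectory() as tmp:
    sage_dir = Path(tmp) / "sage"
    sage_dir.mkdir()
    for name in IDENTITY_FILES:
        (sage_dir / name).write_text(f"placeholder for {name}\n", encoding="utf-8")
    sage = load_agent(sage_dir)
    print(sorted(sage))
```

The point of the sketch is that nothing in the loader is specific to SAGE: a new agent is a new directory of files.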

Compare that to Nightcrawler’s initial deployment, which was a multi-hour engineering session involving Node.js setup, CLI integration, error handling, and service management. The runtime does its job: you add identity, you get an agent.

The YouTube classification pipeline

SAGE’s first real job was YouTube content classification. I had an Inbox playlist that was growing unchecked — videos saved for later that never got organized. I wanted SAGE to automatically classify these videos into categorized playlists: AI Development, AI Other, Documentaries, and a catch-all Other category.

The pipeline runs as a FOUNDRY workflow — 21 nodes in total, triggered two ways: a webhook for on-demand runs and a scheduled trigger at 02:00 every night. The classification logic uses Gemini 2.5 Flash, which turned out to be the right model for this job. Classification doesn’t need the depth of Claude or GPT-4 — it needs fast, cheap, and accurate pattern matching against a small set of categories. Gemini Flash delivers that at a fraction of the cost.
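The classification step itself is small. The sketch below shows one way to frame it: build a prompt that lists the four categories, then normalize whatever the model replies to one of them, falling back to the catch-all. The prompt wording and both function names are illustrative assumptions; the actual workflow nodes and the Gemini call are not reproduced here.

```python
# The four target playlists named in the post.
CATEGORIES = ("AI Development", "AI Other", "Documentaries", "Other")


def build_prompt(title: str, description: str) -> str:
    """Assemble a single-label classification prompt (wording is illustrative)."""
    options = "\n".join(f"- {category}" for category in CATEGORIES)
    return (
        "Classify this YouTube video into exactly one category.\n"
        f"Categories:\n{options}\n\n"
        f"Title: {title}\n"
        f"Description: {description[:500]}\n"
        "Answer with the category name only."
    )


def parse_category(reply: str) -> str:
    """Map a free-text model reply to a known category, defaulting to 'Other'."""
    cleaned = reply.strip().strip("\"'.").casefold()
    for category in CATEGORIES:
        if cleaned == category.casefold():
            return category
    return "Other"


# Usage: a reply with stray whitespace, casing, and punctuation still resolves.
print(parse_category("  ai development.\n"))
print(parse_category("Cooking"))
```

Defaulting unknown replies to "Other" keeps a malformed model answer from ever producing a playlist that does not exist.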

One detail that cost me time: getting a Gemini API key. The standard approach is through Google Cloud’s gcloud CLI, but API keys created that way had a quota limit of zero — effectively unusable. The workaround was creating the key through Google AI Studio instead, which produces keys with actual usable quotas. Same underlying API, different provisioning path, wildly different results.

The batch DELETE pitfall

The classification pipeline needs to move videos between playlists: read from Inbox, add to the target playlist, then remove from Inbox. The removal step exposed a YouTube API quirk that took real debugging effort.

When you send multiple playlistItems.delete requests concurrently, the YouTube API returns 409 “operation aborted” errors. Not for every request — just enough of them to leave your Inbox in an inconsistent state. Some videos removed, some still there, no clear pattern.

The root cause appears to be that YouTube’s playlist backend treats the playlist as a single mutable resource, so concurrent deletes conflict with each other internally. The API doesn’t queue them or retry them; it just fails.

The fix in n8n was explicit batching: a batch size of 1 with a 500-millisecond interval between requests. Sequential, slow, and reliable. For a nightly batch job processing maybe 10 to 20 videos, the extra few seconds of execution time are irrelevant. Reliability beats speed every time for background automation.

I also set the error handling to continueRegularOutput so that if one delete fails, the workflow continues processing the rest rather than aborting the entire batch. A failed delete means the video stays in the Inbox and gets picked up on the next run. Self-healing through retry.
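Outside of n8n, the same two settings (one request at a time with a pause in between, and continue on failure) can be sketched in a few lines. The `delete_one` callable stands in for a real `playlistItems.delete` call and is an assumption; the demo uses a fake that fails for one item so the behavior is visible without touching the API.

```python
import time
from typing import Callable, Iterable


def delete_sequentially(
    item_ids: Iterable[str],
    delete_one: Callable[[str], None],
    interval_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[list[str], list[str]]:
    """Delete items one at a time, pausing between requests.

    A failed delete is recorded and skipped rather than aborting the run,
    so the item simply stays in the Inbox for the next run to retry.
    """
    removed: list[str] = []
    failed: list[str] = []
    for index, item_id in enumerate(item_ids):
        if index > 0:
            sleep(interval_s)  # 500 ms between requests, never concurrent
        try:
            delete_one(item_id)
            removed.append(item_id)
        except Exception:
            failed.append(item_id)
    return removed, failed


# Usage with a fake delete that fails for one item (no network involved).
pauses: list[float] = []


def fake_delete(item_id: str) -> None:
    if item_id == "b":
        raise RuntimeError("409 operation aborted")


removed, failed = delete_sequentially(["a", "b", "c"], fake_delete, sleep=pauses.append)
print(removed, failed)
```

Injecting `sleep` keeps the demo instant; in a real run the default `time.sleep` provides the half-second gap.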

NPO daily recommendations

SAGE’s second job was daily TV recommendations from the Dutch public broadcasting schedule (NPO). Every day at 17:00, SAGE loads the evening’s programming schedule through a dedicated MCP server, cross-references it against a set of personal viewing preferences, and posts curated recommendations to Hub Chat.

The MCP server wraps the NPO’s public API, providing tools for fetching channel schedules, series details, and episode information. SAGE loads my favorites list for context — which series I follow, which genres I prefer — and filters the evening’s schedule down to relevant picks.

It’s a small feature, but it’s the kind of thing that demonstrates the value of a curator agent. I don’t need to check the TV guide every evening. SAGE has already done it and surfaced the two or three shows worth watching. That’s the librarian’s value proposition: not just having the information, but knowing what’s relevant to you.
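The filtering step can be pictured as a simple scoring pass over the evening schedule. This is only a sketch: the `Broadcast` fields, the scoring weights, and the program titles are invented for illustration, and the real schedule arrives through the MCP server's tools, which are not shown here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Broadcast:
    title: str
    genre: str
    channel: str
    start: str  # "HH:MM", local time


def recommend(schedule, favorite_series, favorite_genres, limit=3):
    """Return up to `limit` broadcasts, followed series first, then liked genres."""
    series = {s.casefold() for s in favorite_series}
    genres = {g.casefold() for g in favorite_genres}
    scored = []
    for broadcast in schedule:
        # Assumed weighting: a followed series outranks a genre match.
        score = 2 * (broadcast.title.casefold() in series) + (
            broadcast.genre.casefold() in genres
        )
        if score:
            scored.append((-score, broadcast.start, broadcast))
    scored.sort(key=lambda entry: entry[:2])  # best score first, then earliest start
    return [broadcast for _, _, broadcast in scored[:limit]]


# Usage with made-up schedule entries.
schedule = [
    Broadcast("Nature Documentary X", "documentary", "NPO 2", "20:30"),
    Broadcast("Quiz Y", "quiz", "NPO 1", "19:00"),
    Broadcast("Series Z", "drama", "NPO 3", "21:00"),
]
picks = recommend(schedule, favorite_series={"Series Z"}, favorite_genres={"documentary"})
for pick in picks:
    print(pick.start, pick.channel, pick.title)
```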

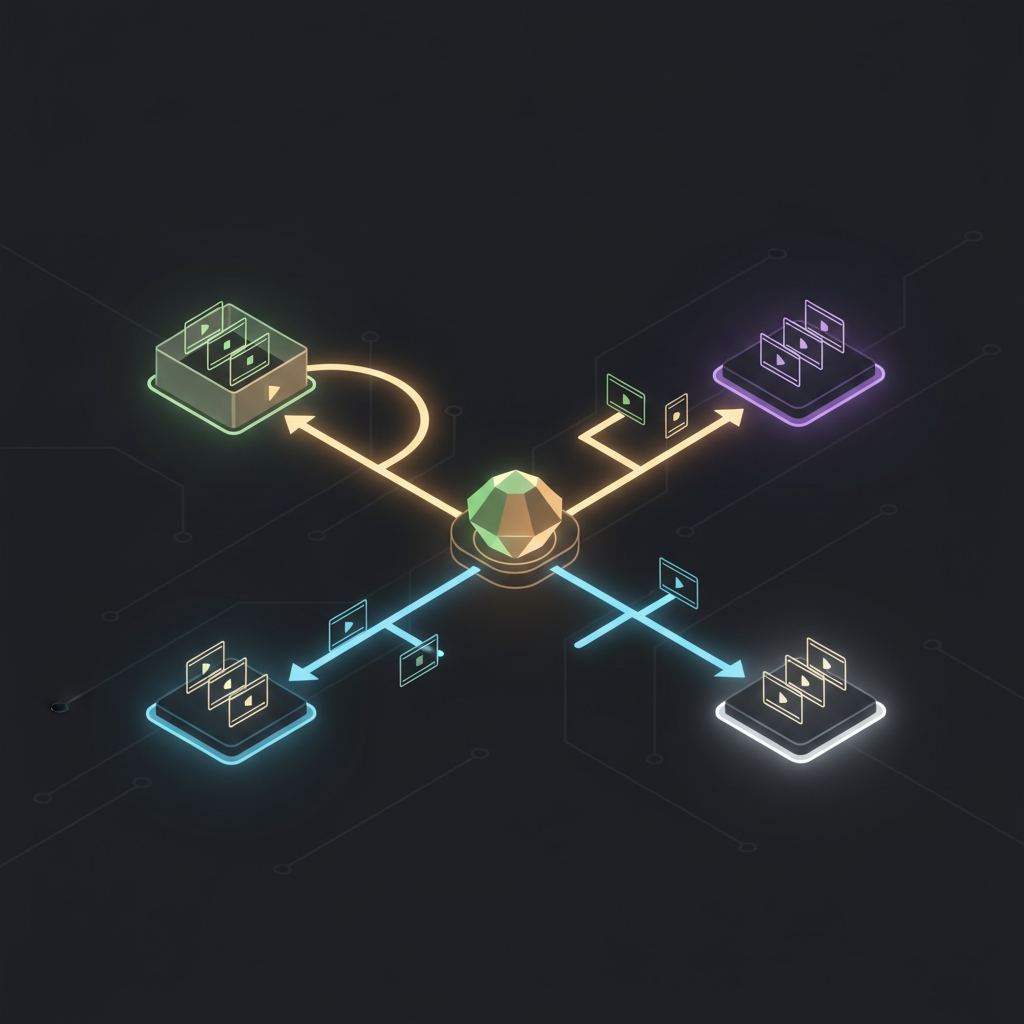

Four agents, same constraints

With SAGE deployed, the container now runs four agents. The resource impact was negligible — SAGE’s Node.js process adds the same 50-80 MB as the other agents when idle. The container still sits comfortably within its 2 GB allocation.

The systemd setup follows the same pattern as the other three agents:

- nightcrawler.service: autonomous personal agent
- gideon.service: Hub intelligence and navigator
- jarvis.service: technical engineering specialist
- sage.service: knowledge librarian and content curator

Each service starts on boot, restarts on failure, and logs to journald. The operational overhead of a fourth agent is essentially zero.
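Because all four units follow one pattern, they can be thought of as a single template with the agent name filled in. The sketch below renders such a unit file as text. The directives are standard systemd (`Restart=on-failure`, `WantedBy=multi-user.target`, with stdout going to journald by default), but every path and the `--config` flag are hypothetical, since the post does not show the real unit files.

```python
from string import Template

# Descriptions are taken from the service list in the post.
AGENTS = {
    "nightcrawler": "autonomous personal agent",
    "gideon": "Hub intelligence and navigator",
    "jarvis": "technical engineering specialist",
    "sage": "knowledge librarian and content curator",
}

UNIT_TEMPLATE = Template("""\
[Unit]
Description=$description
After=network-online.target
Wants=network-online.target

[Service]
# Hypothetical paths and flag: the real ones are not given in the post.
ExecStart=/usr/bin/node /opt/hub/runtime/index.js --config /opt/hub/agents/$name/config.json
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
""")


def render_unit(name: str) -> str:
    """Render the unit file text for one agent from the shared template."""
    return UNIT_TEMPLATE.substitute(name=name, description=AGENTS[name])


# Usage: print the unit that would be saved as sage.service.
unit = render_unit("sage")
print(unit)
```

Enabling the rendered unit with `systemctl enable` is what ties `WantedBy=multi-user.target` to starting on boot.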

What SAGE taught me

The SAGE deployment confirmed something I’d suspected but hadn’t proven: the hard work in a multi-agent system is the infrastructure and runtime, not the agents themselves. Once you have a solid shared runtime, a clean identity layer, and an automation backbone like FOUNDRY for integrations, spinning up new agents is genuinely fast.

But SAGE also taught me something about agent design. The three existing agents — Nightcrawler, Gideon, JARVIS — are all reactive in their primary mode. They respond to messages, answer questions, process requests. SAGE’s classification and recommendation pipelines are proactive by design. Nobody asks SAGE to classify videos at 2 AM — it just does it.

This proactive pattern opens up a different category of agent work. Instead of “agent as conversational partner,” it’s “agent as background curator.” The agent has taste, preferences, and judgment, and it applies them continuously without being asked. That’s a fundamentally different relationship from asking a chatbot questions.

Nightcrawler had hints of this with its heartbeat monitor, but SAGE makes it the primary mode of operation. The conversations are secondary — the real value is in the overnight classification run and the 17:00 TV recommendations that are just there when I want them.

Four agents. Same container. Same 2 GB of RAM. The system keeps growing, and the constraints keep holding.