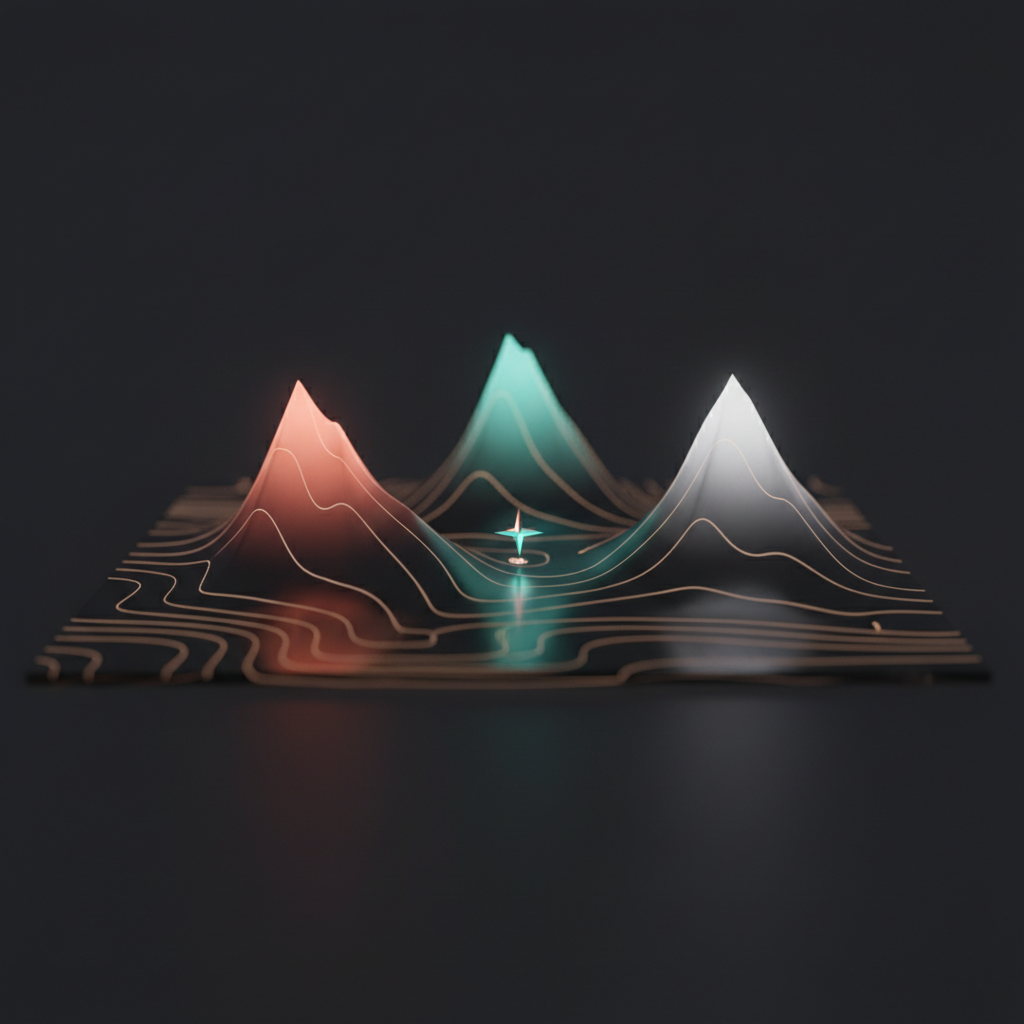

Model Mapping: Finding Your AI Topography

I tested Claude, GPT, and Gemini on identical tasks to build a personal model map. The results surprised me — especially what mattered most.

Weekend two of the AI Resolution had a clear objective: stop guessing which AI model is “the best” and start measuring. I had been using Claude and GPT interchangeably for months, picking whichever tab happened to be open. That is not a strategy. That is laziness.

So I designed a test. Same tasks, multiple models, scored consistently. The goal was not to crown a winner but to build a personal model map — a practical understanding of which model excels at what, for the kind of work I actually do.

The lineup

I tested six models across three categories:

- Claude Opus 4.5 — Anthropic’s flagship, my daily driver at the time

- Claude Sonnet 4.5 — The faster, lighter Claude

- GPT-5.1 — OpenAI’s latest general-purpose model

- GPT-5.1-Codex — OpenAI’s specialized coding model

- Gemini 3 Pro — Google’s top-tier offering

- Gemini 3 Flash — Google’s speed-optimized model

The test categories

I split the evaluation into three areas that reflect my actual workload:

Development — Code review, architecture suggestions, debugging, refactoring. This is the bread and butter. I write frontend code for a living, so I need models that understand TypeScript, Angular, React, and modern build tooling.

Writing — LinkedIn posts, technical documentation, professional emails. I write more prose than most developers realize, and tone matters. A LinkedIn post that sounds like it was written by a corporate chatbot is worse than no post at all.

Research — Technical research, decision support, tool comparisons. When I need to evaluate a library or make an architectural decision, I want structured analysis with trade-offs, not a sales pitch.
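Scoring consistently across those three categories is easier with one fixed record shape for every result. The following is a minimal sketch, not the exact sheet I used: the `Score` fields, the `average_by_model_and_category` helper, and the `model-a` / `model-b` names with their ratings are all illustrative placeholders, not measured results. It assumes a 1 to 5 rating scale.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Score:
    """One rated output. All field names here are hypothetical."""
    model: str      # placeholder model name, e.g. "model-a"
    category: str   # "development", "writing", or "research"
    task: str       # short task label, e.g. "linkedin-post"
    rating: int     # assumed 1-5 scale


def average_by_model_and_category(scores):
    """Group ratings by (model, category) and average each group."""
    buckets = defaultdict(list)
    for s in scores:
        buckets[(s.model, s.category)].append(s.rating)
    return {key: mean(ratings) for key, ratings in buckets.items()}


# Placeholder ratings for illustration only, not real test results.
scores = [
    Score("model-a", "writing", "linkedin-post", 4),
    Score("model-a", "writing", "email", 5),
    Score("model-b", "writing", "linkedin-post", 3),
]
averages = average_by_model_and_category(scores)
```

Keeping the task label on every record means you can still drill down later when a category average hides one bad outlier.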

The LinkedIn post test

This one was the highlight. I asked each model to write a LinkedIn post announcing The Hub — my new second brain project with the S.H.I.E.L.D. theme. Same prompt, same context, same constraints.

The results were revealing:

GPT-5.1 nailed instruction following. Every constraint I specified (length, tone, structure, the Marvel reference) showed up in the output exactly as requested. It was technically perfect. It also read like a GPT-5.1 post, which is a problem if your audience has developed pattern recognition for AI-generated LinkedIn content.

Gemini 3 Pro over-structured the response. It produced something that looked like a product announcement with bullet points, headers, and a call-to-action that felt like it belonged on a landing page. Technically competent, but it missed the brief. I wanted conversational, not corporate.

Claude Opus 4.5 matched my voice most closely. It captured the casual-but-technical tone I use when writing about side projects. The sentences had the right rhythm, the observations felt genuine, and it understood that a LinkedIn post about a personal project should feel personal. However, and this was interesting, it dropped the Marvel theme entirely. The S.H.I.E.L.D. reference was in the prompt, but Opus left it out of the post.

The winner? It depends on what you value. Opus for tone and voice matching. GPT for strict instruction adherence. Neither was perfect, which is exactly the point of building a model map instead of picking a favorite.

Documentation generation

For the technical writing test, I asked four models — Gemini Pro, Gemini Flash, Claude Sonnet, and Claude Opus — to generate developer documentation for a component. Same input, same format requirements.

The surprise: they all scored 4 out of 5 on accuracy and style. Documentation quality has largely converged across models. Given clear technical input and a format to follow, all four produced usable output.

The differentiator was speed. Sonnet and Flash were dramatically faster, finishing in seconds where Opus and Gemini Pro took significantly longer. For documentation tasks where the quality ceiling is the same, speed wins. I do not need to wait thirty seconds for documentation that I am going to review and edit anyway.

This was a useful insight: for tasks where quality is roughly equivalent across models, optimize for speed and cost, not for marginal quality improvements.
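That rule of thumb can be written down as a tiny selection function: filter to the models that clear a quality bar, then take the fastest. This is a sketch under assumptions. The `Result` type, the `pick_fastest_good_enough` name, and the `slow-model` / `fast-model` / `weak-model` entries with their timings are invented for illustration and are not my measured latencies.

```python
from typing import NamedTuple


class Result(NamedTuple):
    """One model's outcome on a task. Hypothetical structure."""
    model: str
    quality: int     # assumed 1-5 scale
    seconds: float   # wall-clock time to finish the task


def pick_fastest_good_enough(results, min_quality):
    """Return the fastest model whose quality meets the bar."""
    eligible = [r for r in results if r.quality >= min_quality]
    if not eligible:
        raise ValueError("no model meets the quality bar")
    return min(eligible, key=lambda r: r.seconds).model


# Placeholder numbers for illustration only.
results = [
    Result("slow-model", 4, 30.0),
    Result("fast-model", 4, 5.0),
    Result("weak-model", 2, 1.0),
]
choice = pick_fastest_good_enough(results, min_quality=4)
```

Note that the quickest entry overall is rejected because it misses the quality bar. Speed only becomes the tiebreaker once quality is equivalent.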

The development tests

Code review was more nuanced. I fed each model the same pull request and asked for a review.

Opus consistently caught the most subtle issues — things like potential race conditions and implicit type coercions that other models glossed over. GPT-5.1-Codex was excellent at suggesting concrete refactors with working code. Gemini tended to focus on style and convention adherence, which is useful but less critical.

For debugging, I found that the conversation style matters more than the model. Being able to iteratively share context — “here is the error, here is the relevant code, here is what I have tried” — works better in some interfaces than others.

The real insight: interface matters

Here is what I did not expect to be the biggest takeaway: the interface matters as much as the model quality.

I tested these models through web UIs, APIs, and CLI tools. The same model felt different depending on how I accessed it. Claude through Claude Code (the CLI) felt more integrated into my workflow than Claude through the web app. Gemini through the Gemini CLI was faster to iterate with than through AI Studio.

The reason is context switching. When I am in a terminal writing code, opening a browser tab to paste code into a chat window is a workflow interruption. A CLI tool that lives in the same environment as my code removes that friction. The model behind the CLI could be five percent worse and still feel better because I am not breaking my flow.

This is why I gravitated toward CLI-based AI tools for development work. Not because the models are different — the underlying model is often the same — but because the interface removes friction.

Building the map

I used the AIDB Model Map community tool at aidbmodelmap.com to structure my findings. It gives you a framework for recording model performance across tasks, which is more useful than keeping notes in your head.

My personal map, simplified:

- Code review and architecture — Claude Opus. Catches nuance, understands broader system implications.

- Quick code generation and refactoring — GPT-5.1-Codex or Claude Sonnet. Fast, accurate, good enough for code I will review anyway.

- Writing in my voice — Claude Opus. Best at matching tone and making output feel human.

- Instruction-heavy tasks — GPT-5.1. When the prompt has twelve constraints and all of them matter, GPT follows directions most reliably.

- Documentation and boilerplate — Whichever is fastest. Sonnet or Flash. Quality is equivalent; speed is the differentiator.

- Research and analysis — Model matters less than tool. Perplexity and specialized research tools outperform raw model chat for this.
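If you want the map somewhere more durable than a list in a post, a plain lookup table is enough. The sketch below encodes the map above as a Python dictionary. The task keys and the `models_for` helper are names I made up for this example, and this is not the format the AIDB Model Map tool uses.

```python
# Task keys are hypothetical labels; the model picks mirror the list above.
MODEL_MAP = {
    "code-review": ["Claude Opus"],
    "architecture": ["Claude Opus"],
    "quick-codegen": ["GPT-5.1-Codex", "Claude Sonnet"],
    "refactoring": ["GPT-5.1-Codex", "Claude Sonnet"],
    "writing-in-my-voice": ["Claude Opus"],
    "instruction-heavy": ["GPT-5.1"],
    "documentation": ["Claude Sonnet", "Gemini Flash"],
    "boilerplate": ["Claude Sonnet", "Gemini Flash"],
    "research": ["Perplexity"],
}


def models_for(task):
    """Return the preferred models for a task, or an empty list if unmapped."""
    return MODEL_MAP.get(task, [])


doc_models = models_for("documentation")
unmapped = models_for("video-editing")
```

An empty result for an unmapped task is a useful signal in itself: it marks the next thing worth testing.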

There is no best model

The conclusion is unsatisfying if you want a simple answer: there is no single best model. Different models excel at different tasks, and your personal map will look different from mine because you do different work.

But that is actually a useful conclusion. It means you should stop asking “which AI model should I use?” and start asking “which AI model should I use for this specific task?” The answer changes depending on whether you are debugging a race condition, writing a blog post, or generating API documentation.

Build your own map. Test the models on your actual workload, not on benchmarks designed by the model providers. You will be surprised by what you find.

The next weekend, I went deeper on research tools — and discovered that deep research might be the most underrated AI capability available right now.